AICCA: AI-Driven Cloud Classification Atlas

Project Summary:

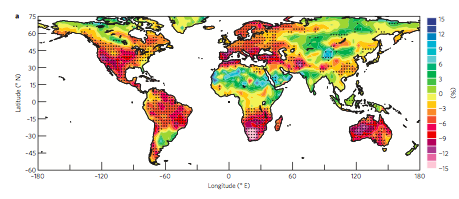

Clouds represent the single largest uncertainty in projections of future climate change. Their multi-scale nature means that even state-of-the-art numerical climate simulations cannot reliably project how their distribution, frequency, and properties will change under high-CO2 conditions. Observational data may therefore be key to understanding cloud behavior, but the decades and petabytes of multispectral cloud imagery collected by satellite instruments have received only limited use. Recent advances in artificial intelligence (AI) now make it possible to tap this underutilized resource to improve understanding of cloud dynamics and feedbacks. A core requirement for analysis of cloud images is dimension reduction: dividing a complex high-resolution spatial field into a more limited set of categories. Most attempts at automated cloud classification to date have involved supervised learning of a limited number of human-defined cloud categories, but spatial patterns of cloud textures vary widely, and our goal is to produce novel classifications that provide new insight.

This project therefore proposes Rotation-Invariant Cloud Clustering (RICC), which instead leverages modern unsupervised deep learning methods to identify robust and meaningful clusters of cloud patterns, and applies the resulting clustering method to 22 years of data from the Moderate Resolution Imaging Spectroradiometer (MODIS) instruments on NASA’s Aqua and Terra satellites. The resulting AI-driven dataset of cloud classifications, AICCA: AI-driven Cloud Classification Atlas, will deliver in a compact form (tens of GB of class labels, with high spatial and temporal resolution) information currently accessible only as hundreds of TB of multispectral images.
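To make the idea of rotation invariance concrete, here is a minimal sketch, not the RICC method itself. It compares two patches by taking the smallest mean squared difference over the four 90-degree rotations of one of them, so that the same texture seen at a different orientation scores as identical. The function name `rotation_invariant_distance`, the 8 x 8 random patch, and the restriction to 90-degree rotations are all assumptions made for illustration.

```python
import numpy as np

def rotation_invariant_distance(a: np.ndarray, b: np.ndarray) -> float:
    """Smallest mean squared difference between `a` and the four
    90-degree rotations of `b` (both must be square 2-D arrays)."""
    return min(float(np.mean((a - np.rot90(b, k)) ** 2)) for k in range(4))

rng = np.random.default_rng(0)
patch = rng.random((8, 8))       # hypothetical single-band patch
rotated = np.rot90(patch, 1)     # same texture, different orientation

# A plain pixel-wise comparison sees two different images ...
plain = float(np.mean((patch - rotated) ** 2))
# ... while the rotation-aware comparison recognizes them as the same.
invariant = rotation_invariant_distance(patch, rotated)
print(f"plain={plain:.4f}, invariant={invariant:.4f}")
```

A measure of this kind keeps orientation from splitting one cloud texture into several clusters, which is the motivation for building invariance into the clustering pipeline.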

AICCA will enable the data-driven diagnosis of patterns of cloud organization, provide insight into their evolution on timescales of hours to decades, and contribute to a democratization of climate research by facilitating access to core data.

For more information on the project:

Paper Link: https://www.mdpi.com/2072-4292/14/22/5690

Paper Summary:

Clouds play an important role in the Earth’s energy budget and their behavior is one of the largest uncertainties in future climate projections. Satellite observations should help in understanding cloud responses, but decades and petabytes of multispectral cloud imagery have to date received only limited use. This study describes a new analysis approach that reduces the dimensionality of satellite cloud observations by grouping them via a novel automated, unsupervised cloud classification technique based on a convolutional autoencoder, an artificial intelligence (AI) method good at identifying patterns in spatial data.
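The compress-then-reconstruct idea behind an autoencoder can be illustrated with a much simpler stand-in. The sketch below uses a linear encoder and decoder obtained from a truncated SVD (equivalent to PCA), not the convolutional autoencoder described in the paper. The synthetic data, the 16 x 16 patch size, and the 5-dimensional latent space are assumptions chosen only to keep the example small.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical stand-in data: 200 flattened 16x16 "patches" that lie
# close to a 5-dimensional subspace, plus a little noise.
basis = rng.normal(size=(5, 256))
patches = rng.normal(size=(200, 5)) @ basis + 0.01 * rng.normal(size=(200, 256))

mean = patches.mean(axis=0)
# Rows of vt are the principal directions of the centered data.
_, _, vt = np.linalg.svd(patches - mean, full_matrices=False)
components = vt[:5]                      # keep a 5-dimensional latent space

def encode(x: np.ndarray) -> np.ndarray:
    return (x - mean) @ components.T     # (n, 256) -> (n, 5)

def decode(z: np.ndarray) -> np.ndarray:
    return z @ components + mean         # (n, 5) -> (n, 256)

latent = encode(patches)
error = float(np.mean((patches - decode(latent)) ** 2))
print(latent.shape, error)
```

Each patch is summarized by a short latent vector from which it can be approximately rebuilt. A convolutional autoencoder plays the same role but learns nonlinear, spatially aware features rather than a linear projection.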

Our technique combines a rotation-invariant autoencoder and hierarchical agglomerative clustering to generate cloud clusters that capture meaningful distinctions among cloud textures, using only raw multispectral imagery as input. Cloud classes are therefore defined based on spectral properties and spatial textures without reliance on location, time/season, derived physical properties, or pre-designated class definitions. We use this approach to generate a unique new cloud dataset, the AI-driven cloud classification atlas (AICCA), which clusters 22 years of ocean images from the Moderate Resolution Imaging Spectroradiometer (MODIS) instruments on NASA’s Aqua and Terra satellites (198 million patches, each roughly 100 km x 100 km, or 128 x 128 pixels) into 42 AI-generated cloud classes, a number determined via a newly developed stability protocol that we use to maximize richness of information while ensuring stable groupings of patches. AICCA thereby translates 801 TB of satellite images into 54.2 GB of class labels and cloud top and optical properties, a reduction by a factor of roughly 15,000. The 42 AICCA classes produce meaningful spatio-temporal and physical distinctions and capture a greater variety of cloud types than do the 9 International Satellite Cloud Climatology Project (ISCCP) categories: for example, multiple textures in the stratocumulus decks along the west coasts of North and South America. We conclude that our methodology has explanatory power, in that it captures regionally unique cloud classes and provides rich but tractable information for global analysis. AICCA delivers the information from multispectral images in a compact form, enables data-driven diagnosis of patterns of cloud organization, provides insight into cloud evolution on timescales of hours to decades, and helps democratize climate research by facilitating access to core data.
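The clustering step can be sketched as follows, assuming latent vectors are already available. This is an illustration using SciPy's hierarchical clustering routines, not the AICCA pipeline. The Ward linkage, the two-dimensional synthetic latent vectors, and the choice of 3 clusters are assumptions for the example; AICCA itself uses 42 classes selected by its stability protocol.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage

rng = np.random.default_rng(0)
# Hypothetical latent vectors: three well-separated groups of 50 points.
centers = np.array([[0.0, 0.0], [10.0, 0.0], [0.0, 10.0]])
latent = np.vstack([c + rng.normal(scale=0.5, size=(50, 2)) for c in centers])

# Build the merge tree bottom-up: every point starts as its own cluster
# and the two closest clusters are merged at each step.
# Ward linkage is an assumption made for this sketch.
tree = linkage(latent, method="ward")

# Cut the tree so that at most 3 flat clusters remain (labels start at 1).
labels = fcluster(tree, t=3, criterion="maxclust")
print(np.bincount(labels)[1:])   # number of points in each cluster
```

Because the full merge tree is built once, it can be cut at different cluster counts without re-running the clustering, which is convenient when comparing candidate numbers of classes.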